Abstract

Abstract

Localization is a key task in robot navigation, and many techniques exist for it.

In many plausible scenarios, a robot might face unforeseen, dynamic obstacles, rendering any pre-determined map inaccurate for localization.

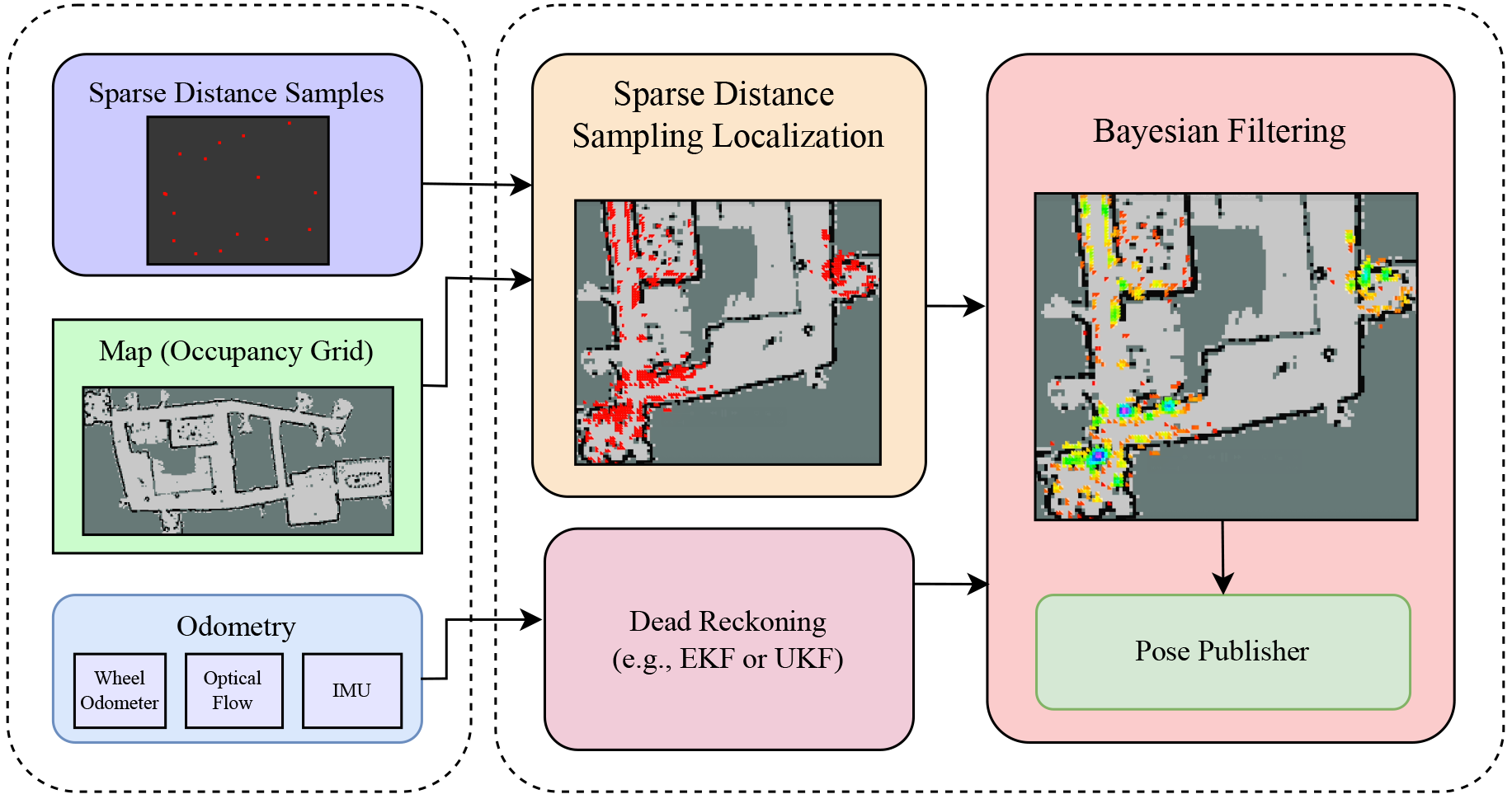

In this work, we propose a robust lifelong localization framework in dynamic planar indoor environments, using the robot’s odometry and sparse distance sampling.

We demonstrate how distance samples can be used to provide a robust prior on the robot’s location. This technique can solve the kidnapped robot problem in real time, up to symmetries. Based on insights from real-world recorded data, we also account for dynamic obstacles.

We then fuse this prior, over time, with the odometry to converge to the robot’s location.

A central property of our method is that it provably converges to the robot’s ground truth pose even in large indoor environments when the environment is static. We further show that this guarantee also holds in dynamic environments, as long as the nature of those changes has been correctlylearned.

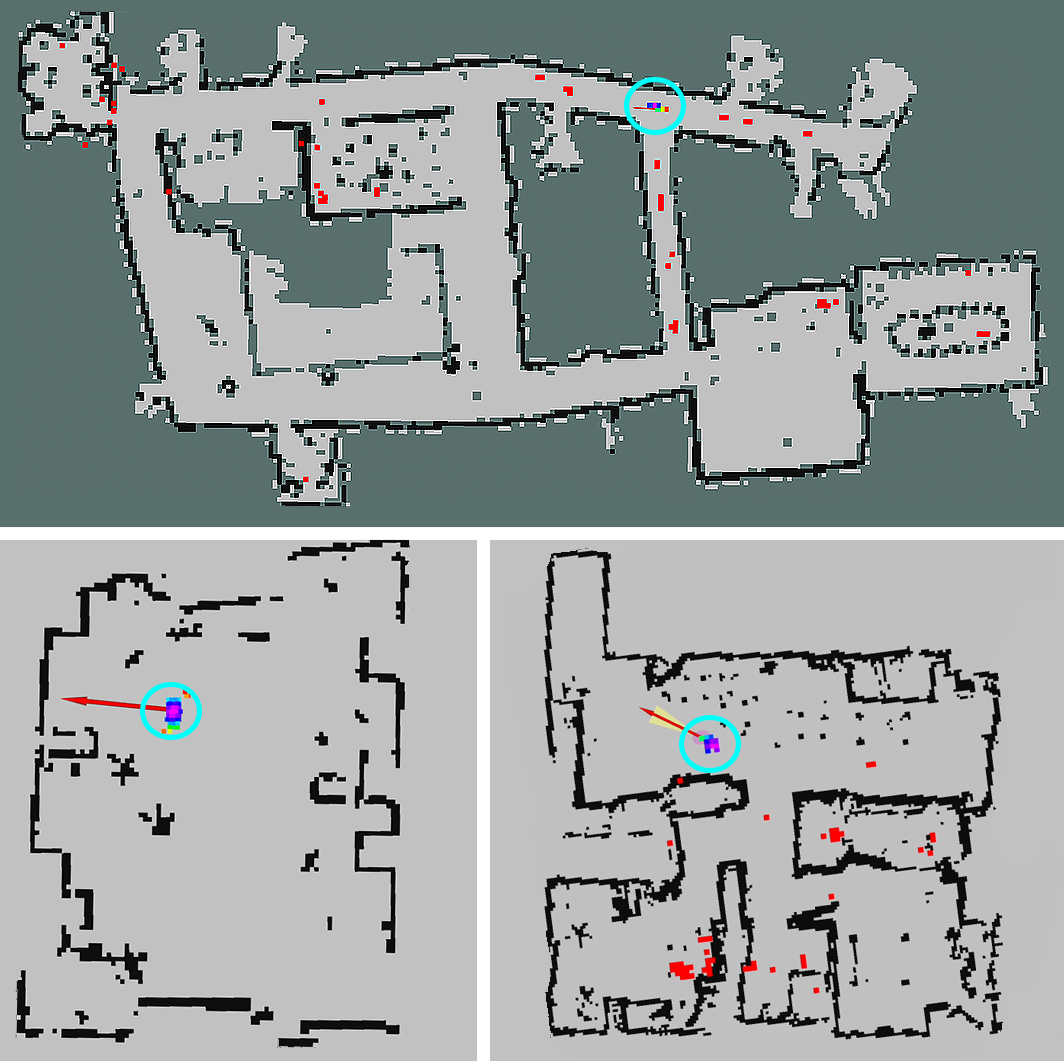

We demonstrate the effectiveness of our approach in different real-world indoor environments.

In particular, we achieve a localization comparable to SLAM with merely a few (sixteen) distance samples, as opposed to the full LiDAR range.

Sufficing with only sparse distance sampling is advantageous in terms of sensor cost, privacy, storage space, and transmission bandwidth.

Source Code

Our code is open source, and is comprised of the following modules (which are available on GitHub):

- The main repository of the SDSL algorithm (written in C++ with Python bindings): https://github.com/TAU-CGL/sdsl

- To use the SDSL method with an actual robot, we run a ROS2 node that uses the SDSL package, listens to the /sds and /odom topics and publishes localization: https://github.com/TAU-CGL/sdsl_ros2

- In our experiments we use an iRobot Create 3, equipped with a Raspberry Pi and RPLIDAR A1. Out of that full LiDAR range, we extract only k=16 range measurements and publish them in the /sds topic. This code is available in: https://github.com/TAU-CGL/sdsl_create3

- Finally, we measured the ratio between the k range measurements and the k’ measurements that actually measured the environment’s map (and not dynamic obstacles). To record this data, we have built easily-reproducible 3D printed devices, equipped with a Raspberry Pi and RPLIDAR C1 LiDARs. The source code and building instructions is publicly available at https://github.com/TAU-CGL/rplidar_recorder, and further details on the experiment can be found here.